I learnt how cool it is to train a robot with AI

As featured on Mothership

Read the original article at: https://mothership.sg/2021/06/dsta-brainhack-robot/

It’s one thing to hear about the advances in artificial intelligence (AI) on the news, such as China’s creation of an AI news anchor, or robots making food deliveries and serving you coffee.

It’s another matter, however, when you get a hands-on experience of what can be done at the rudimentary level to build your own robot, especially if you’re a person who knows next to nothing about AI and such.

When I was asked to go for BrainHack’s Today I Learned (TIL), held by the Defence Science and Technology Agency (DSTA), I have to admit I was rather unsure at first, as I had absolutely no knowledge about anything related to robotics or even coding.

Lau Wei Cheng, an AI engineer from DSTA’s Digital Hub, was my assigned mentor for the day and assured me that such a topic was not as inaccessible as I had feared.

In fact, what I learnt was that AI development has now reached the point where you can train your own AI model to recognise basic images and words, which you can then put into a robot bought off the shelf.

Photo by Matthias Ang

Training your robot to “see”

If there is one thing that facial recognition, self-driving cars and deep fakes have in common, it’s that all three fields can depend on computer vision to achieve their primary purposes.

This simply means trying to make sense of an image, be it to copy a certain style and generate an image, as in the case of deep fakes, or to avoid traffic and humans on the road, as in the case of self-driving cars.

AI engineer Lau Wei Cheng from DSTA’s Digital Hub introducing Computer Vision. Photo by Tan Jia Hwee

And at the most basic level, it is about getting a robot to recognise the image of a dog.

Photo by Matthias Ang

So how is this done?

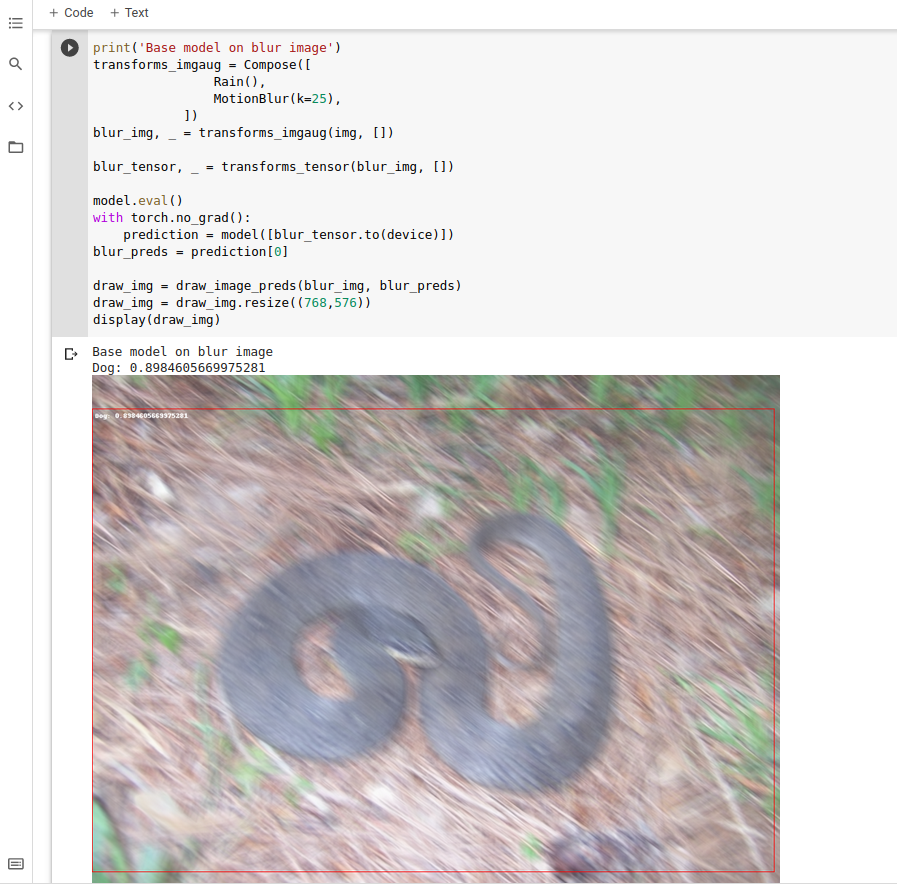

Take, for instance, an image with two dogs.

Here, the AI model is essentially 99 per cent confident that both of the animals are dogs.

Problems start to arise, however, with blurred images: the AI model recognised a blurred snake as a “dog”, with 89 per cent confidence to boot.

This is where the real challenge of training an AI model to pick out such differences comes in.

According to Lau, if an AI model has only seen sharp images, that is all it will recognise. Showing the AI model blurred images is therefore key to fine-tuning it, so that it learns to recognise such images as well.

More importantly, the AI model must be constantly fed a good mix of data, with both clear and blurred images.

Otherwise, he added, the AI model may eventually recognise only blurred images.
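To make Lau’s point about a “good mix” concrete, here is a minimal Python sketch of how a training set might be prepared so that it contains both clear and blurred copies of images. It is an illustration only, not the method used at BrainHack: the function names `box_blur` and `mix_clear_and_blurred`, the simple box filter, and the 50 per cent default `blur_fraction` are all assumptions made for this example.

```python
import random

def box_blur(image, radius=1):
    """Blur a grayscale image (a list of rows of numbers) with a box filter."""
    height, width = len(image), len(image[0])
    blurred = []
    for y in range(height):
        row = []
        for x in range(width):
            # Average the pixel with its neighbours, clipping at the borders.
            total, count = 0, 0
            for ny in range(max(0, y - radius), min(height, y + radius + 1)):
                for nx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += image[ny][nx]
                    count += 1
            row.append(total / count)
        blurred.append(row)
    return blurred

def mix_clear_and_blurred(images, labels, blur_fraction=0.5, seed=0):
    """Return (image, label) pairs in which roughly blur_fraction are blurred."""
    rng = random.Random(seed)  # fixed seed so the mix is reproducible
    dataset = []
    for image, label in zip(images, labels):
        if rng.random() < blur_fraction:
            image = box_blur(image)  # blurred copy; the label stays the same
        dataset.append((image, label))
    return dataset
```

Setting `blur_fraction` to 0 would reproduce the “sharp images only” problem, while setting it to 1 would reproduce the opposite one, where the model only ever sees blurred images.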

The AI can also recognise sounds

Then there is audio processing, which forms the basis of various features such as voice biometrics, smart home devices and transcription.

At the basic level, this means training the AI model to recognise single words such as numbers, animals and directions, and checking whether it can still detect these words accurately with noise in the background.

Within the field of audio processing, however, people may hide messages in audio clips, which can themselves be visualised as an image, said Lau.

Such an image, he added, is known as a spectrogram.
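For the curious, a spectrogram can be sketched in a few lines of Python: the audio is cut into short overlapping frames, and each frame is broken down into how strongly each frequency is present. The version below is a rough illustration that uses only the standard library. The frame size, hop length, Hann window and the slow textbook Fourier transform are choices made for this example, not details from the workshop.

```python
import cmath
import math

def spectrogram(samples, frame_size=64, hop=32):
    """Return a list of frames, each a list of magnitudes per frequency bin."""
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = samples[start:start + frame_size]
        # Taper the frame with a Hann window to reduce edge effects.
        windowed = [
            s * (0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1)))
            for n, s in enumerate(frame)
        ]
        magnitudes = []
        # Naive discrete Fourier transform over the non-negative frequencies.
        for k in range(frame_size // 2 + 1):
            total = sum(
                windowed[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                for n in range(frame_size)
            )
            magnitudes.append(abs(total))
        frames.append(magnitudes)
    return frames

# Usage: a pure tone that completes 8 cycles in every 64-sample frame.
tone = [math.sin(2 * math.pi * 8 * n / 64) for n in range(256)]
frames = spectrogram(tone)
# Index of the loudest frequency bin in the first frame.
peak_bin = max(range(len(frames[0])), key=lambda k: frames[0][k])
```

Plotting `frames` as a grid, with time along one axis, frequency along the other and magnitude as brightness, gives the image Lau described. Anything unusual hidden in the audio would show up as an unexpected pattern in that grid.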

You can also learn these digital technologies and more at BrainHack

If you have no experience at all in coding or any of this geeky tech, fret not.

DSTA’s BrainHack, held annually, allows students from secondary and Integrated Programme schools, Junior Colleges (JC), polytechnics, Institutes of Technical Education (ITE) and universities to participate in various workshops and hackathons to learn about coding and AI, among other fields in digital technologies like cybersecurity, space, mobile app development and extended reality.

Online training and interactive sessions with mentors ensure that all BrainHack participants will be able to have a fruitful experience, whether they are beginners or seasoned techies.

According to Minister for Defence Ng Eng Hen, this year’s BrainHack saw some 4,000 students explore digital tech in fields such as cybersecurity, artificial intelligence and fake propaganda.

https://www.facebook.com/SingaporeDSTA/posts/3018647155045819

You can find out more about what’s going on at this year’s BrainHack here and here.

This sponsored article made the author wonder when we might develop an omniscient mechanical entity.

Top photos by Matthias Ang